Installing Redis and the Redis PHP Extension: An Error-Free Walkthrough

Installing Redis

Download the latest release.

Official site: http://redis.io/ or http://code.google.com/p/redis/downloads/list

Step 1: Download, build, and install

#wget http://redis.googlecode.com/files/redis-2.4.4.tar.gz

#tar zxvf redis-2.4.4.tar.gz

#cd redis-2.4.4

#make

#make install

#cp redis.conf /etc/

Step 2: Edit the configuration

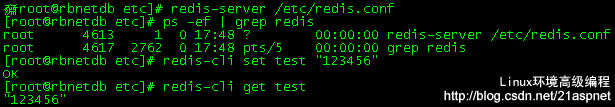

Step 3: Start the server

#redis-server /etc/redis.conf

Check whether the process started successfully.
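
The post does not show a command for this check. One possible way, as a minimal sketch: ask the server for a PING reply through redis-cli. The helper name redis_is_up and the REDIS_CLI override variable are illustrative assumptions, not part of Redis; the sketch also assumes redis-cli is on the PATH after make install.

```shell
#!/bin/sh
# Hypothetical helper (not from the original post): succeed when a Redis
# server answers PING on the given port (default 6379).
# REDIS_CLI is an assumed override for the client binary, useful for testing.
redis_is_up() {
    port="${1:-6379}"
    # A reachable server replies to PING with the literal string PONG.
    reply="$("${REDIS_CLI:-redis-cli}" -p "$port" ping 2>/dev/null)"
    [ "$reply" = "PONG" ]
}

# Usage (run manually once the server has been started):
#   redis_is_up 6379 && echo "redis is running" || echo "redis is not running"
# Alternative: look for the process itself.
#   ps -ef | grep '[r]edis-server'
```

Checking with PING confirms that the server accepts connections, which a process listing alone does not.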

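Shutting down Redis

# redis-cli shutdown

Without -p, this shuts down the instance on the default port, 6379.

To shut down the Redis instance on a specific port:

# redis-cli -p 6397 shutdown

This shuts down the instance listening on port 6397.

Note: on shutdown the cached data is dumped to disk automatically. The file name is given by dbfilename in redis.conf (dump.rdb here), and the file is written to the directory given by dir.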
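
To find where the dump file ends up, the two directives can be read back from the configuration file. This is a minimal sketch: rdb_path is a hypothetical helper name, it assumes values without spaces, and it falls back to the Redis defaults (`./` and `dump.rdb`) when a directive is absent.

```shell
#!/bin/sh
# Hypothetical helper (not from the original post): print the path of the
# RDB dump file, built from the active `dir` and `dbfilename` directives.
rdb_path() {
    conf="${1:-/etc/redis.conf}"
    # Commented-out lines start with "#", so their first field never equals
    # the directive name. The last active occurrence wins.
    dir="$(awk '$1 == "dir" { v = $2 } END { print v }' "$conf")"
    file="$(awk '$1 == "dbfilename" { v = $2 } END { print v }' "$conf")"
    dir="${dir:-.}"
    # Strip one trailing slash so "dir /path/" does not yield a double slash.
    printf '%s/%s\n' "${dir%/}" "${file:-dump.rdb}"
}

# Usage:
#   rdb_path /etc/redis.conf
```

A relative `dir` is resolved against the directory redis-server was started from, so an absolute path is easier to reason about.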

PHP extension

http://code.google.com/p/php-redis/

# Redis configuration file example

# Note on units: when memory size is needed, it is possible to specify

# it in the usual form of 1k 5GB 4M and so forth:

#

# 1k => 1000 bytes

# 1kb => 1024 bytes

# 1m => 1000000 bytes

# 1mb => 1024*1024 bytes

# 1g => 1000000000 bytes

# 1gb => 1024*1024*1024 bytes

#

# units are case insensitive so 1GB 1Gb 1gB are all the same.

# By default Redis does not run as a daemon. Use 'yes' if you need it.

# Note that Redis will write a pid file in /var/run/redis.pid when daemonized.

daemonize yes

# When running daemonized, Redis writes a pid file in /var/run/redis.pid by

# default. You can specify a custom pid file location here.

pidfile /var/run/redis.pid

# Accept connections on the specified port, default is 6379.

# If port 0 is specified Redis will not listen on a TCP socket.

port 6379

# If you want you can bind a single interface, if the bind option is not

# specified all the interfaces will listen for incoming connections.

#bind 127.0.0.1

# Specify the path for the unix socket that will be used to listen for

# incoming connections. There is no default, so Redis will not listen

# on a unix socket when not specified.

#

# unixsocket /tmp/redis.sock

# unixsocketperm 755

# Close the connection after a client is idle for N seconds (0 to disable)

timeout 600

# Set server verbosity to 'debug'

# it can be one of:

# debug (a lot of information, useful for development/testing)

# verbose (many rarely useful info, but not a mess like the debug level)

# notice (moderately verbose, what you want in production probably)

# warning (only very important / critical messages are logged)

loglevel verbose

# Specify the log file name. Also 'stdout' can be used to force

# Redis to log on the standard output. Note that if you use standard

# output for logging but daemonize, logs will be sent to /dev/null

logfile stdout

# To enable logging to the system logger, just set 'syslog-enabled' to yes,

# and optionally update the other syslog parameters to suit your needs.

# syslog-enabled no

# Specify the syslog identity.

# syslog-ident redis

# Specify the syslog facility. Must be USER or between LOCAL0-LOCAL7.

# syslog-facility local0

# Set the number of databases. The default database is DB 0, you can select

# a different one on a per-connection basis using SELECT <dbid> where

# dbid is a number between 0 and 'databases'-1

databases 16

################################ SNAPSHOTTING #################################

#

# Save the DB on disk:

#

# save <seconds> <changes>

#

# Will save the DB if both the given number of seconds and the given

# number of write operations against the DB occurred.

#

# In the example below the behaviour will be to save:

# after 900 sec (15 min) if at least 1 key changed

# after 300 sec (5 min) if at least 10 keys changed

# after 60 sec if at least 10000 keys changed

#

# Note: you can disable saving at all commenting all the "save" lines.

save 900 1

save 300 10

save 60 10000

# Compress string objects using LZF when dump .rdb databases?

# For default that's set to 'yes' as it's almost always a win.

# If you want to save some CPU in the saving child set it to 'no' but

# the dataset will likely be bigger if you have compressible values or keys.

rdbcompression yes

# The filename where to dump the DB

dbfilename dump.rdb

# The working directory.

#

# The DB will be written inside this directory, with the filename specified

# above using the 'dbfilename' configuration directive.

#

# Also the Append Only File will be created inside this directory.

#

# Note that you must specify a directory here, not a file name.

dir /usr/local/redis-2.4.4

################################# REPLICATION #################################

# Master-Slave replication. Use slaveof to make a Redis instance a copy of

# another Redis server. Note that the configuration is local to the slave

# so for example it is possible to configure the slave to save the DB with a

# different interval, or to listen to another port, and so on.

#

# slaveof <masterip> <masterport>

# If the master is password protected (using the "requirepass" configuration

# directive below) it is possible to tell the slave to authenticate before

# starting the replication synchronization process, otherwise the master will

# refuse the slave request.

#

# masterauth <master-password>

# When a slave lost the connection with the master, or when the replication

# is still in progress, the slave can act in two different ways:

#

# 1) if slave-serve-stale-data is set to 'yes' (the default) the slave will

# still reply to client requests, possibly with out of date data, or the

# data set may just be empty if this is the first synchronization.

#

# 2) if slave-serve-stale-data is set to 'no' the slave will reply with

# an error "SYNC with master in progress" to all the kind of commands

# but to INFO and SLAVEOF.

#

slave-serve-stale-data yes

################################## SECURITY ###################################

# Require clients to issue AUTH <PASSWORD> before processing any other

# commands. This might be useful in environments in which you do not trust

# others with access to the host running redis-server.

#

# This should stay commented out for backward compatibility and because most

# people do not need auth (e.g. they run their own servers).

#

# Warning: since Redis is pretty fast an outside user can try up to

# 150k passwords per second against a good box. This means that you should

# use a very strong password otherwise it will be very easy to break.

#

# requirepass foobared

# Command renaming.

#

# It is possible to change the name of dangerous commands in a shared

# environment. For instance the CONFIG command may be renamed into something

# hard to guess so that it will be still available for internal-use

# tools but not available for general clients.

#

# Example:

#

# rename-command CONFIG b840fc02d524045429941cc15f59e41cb7be6c52

#

# It is also possible to completely kill a command by renaming it into

# an empty string:

#

# rename-command CONFIG ""

################################### LIMITS ####################################

# Set the max number of connected clients at the same time. By default there

# is no limit, and it's up to the number of file descriptors the Redis process

# is able to open. The special value '0' means no limits.

# Once the limit is reached Redis will close all the new connections sending

# an error 'max number of clients reached'.

#

# maxclients 128

# Don't use more memory than the specified amount of bytes.

# When the memory limit is reached Redis will try to remove keys with an

# EXPIRE set. It will try to start freeing keys that are going to expire

# in little time and preserve keys with a longer time to live.

# Redis will also try to remove objects from free lists if possible.

#

# If all this fails, Redis will start to reply with errors to commands

# that will use more memory, like SET, LPUSH, and so on, and will continue

# to reply to most read-only commands like GET.

#

# WARNING: maxmemory can be a good idea mainly if you want to use Redis as a

# 'state' server or cache, not as a real DB. When Redis is used as a real

# database the memory usage will grow over the weeks, it will be obvious if

# it is going to use too much memory in the long run, and you'll have the time

# to upgrade. With maxmemory after the limit is reached you'll start to get

# errors for write operations, and this may even lead to DB inconsistency.

#

# maxmemory <bytes>

# MAXMEMORY POLICY: how Redis will select what to remove when maxmemory

# is reached? You can select among six behaviors:

#

# volatile-lru -> remove the key with an expire set using an LRU algorithm

# allkeys-lru -> remove any key accordingly to the LRU algorithm

# volatile-random -> remove a random key with an expire set

# allkeys-random -> remove a random key, any key

# volatile-ttl -> remove the key with the nearest expire time (minor TTL)

# noeviction -> don't expire at all, just return an error on write operations

#

# Note: with all the kind of policies, Redis will return an error on write

# operations, when there are not suitable keys for eviction.

#

# At the date of writing these commands are: set setnx setex append

# incr decr rpush lpush rpushx lpushx linsert lset rpoplpush sadd

# sinter sinterstore sunion sunionstore sdiff sdiffstore zadd zincrby

# zunionstore zinterstore hset hsetnx hmset hincrby incrby decrby

# getset mset msetnx exec sort

#

# The default is:

#

# maxmemory-policy volatile-lru

# LRU and minimal TTL algorithms are not precise algorithms but approximated

# algorithms (in order to save memory), so you can select as well the sample

# size to check. For instance for default Redis will check three keys and

# pick the one that was used less recently, you can change the sample size

# using the following configuration directive.

#

# maxmemory-samples 3

############################## APPEND ONLY MODE ###############################

# By default Redis asynchronously dumps the dataset on disk. If you can live

# with the idea that the latest records will be lost if something like a crash

# happens this is the preferred way to run Redis. If instead you care a lot

# about your data and don't want a single record to get lost you should

# enable the append only mode: when this mode is enabled Redis will append

# every write operation received in the file appendonly.aof. This file will

# be read on startup in order to rebuild the full dataset in memory.

#

# Note that you can have both the async dumps and the append only file if you

# like (you have to comment the "save" statements above to disable the dumps).

# Still if append only mode is enabled Redis will load the data from the

# log file at startup ignoring the dump.rdb file.

#

# IMPORTANT: Check the BGREWRITEAOF to check how to rewrite the append

# log file in background when it gets too big.

appendonly yes

# The name of the append only file (default: "appendonly.aof")

appendfilename appendonly.aof

# The fsync() call tells the Operating System to actually write data on disk

# instead of waiting for more data in the output buffer. Some OS will really flush

# data on disk, some other OS will just try to do it ASAP.

#

# Redis supports three different modes:

#

# no: don't fsync, just let the OS flush the data when it wants. Faster.

# always: fsync after every write to the append only log . Slow, Safest.

# everysec: fsync only if one second passed since the last fsync. Compromise.

#

# The default is "everysec" that's usually the right compromise between

# speed and data safety. It's up to you to understand if you can relax this to

# "no" that will let the operating system flush the output buffer when

# it wants, for better performances (but if you can live with the idea of

# some data loss consider the default persistence mode that's snapshotting),

# or on the contrary, use "always" that's very slow but a bit safer than

# everysec.

#

# If unsure, use "everysec".

# appendfsync always

appendfsync everysec

# appendfsync no

# When the AOF fsync policy is set to always or everysec, and a background

# saving process (a background save or AOF log background rewriting) is

# performing a lot of I/O against the disk, in some Linux configurations

# Redis may block too long on the fsync() call. Note that there is no fix for

# this currently, as even performing fsync in a different thread will block

# our synchronous write(2) call.

#

# In order to mitigate this problem it's possible to use the following option

# that will prevent fsync() from being called in the main process while a

# BGSAVE or BGREWRITEAOF is in progress.

#

# This means that while another child is saving the durability of Redis is

# the same as "appendfsync none", that in practical terms means that it is

# possible to lose up to 30 seconds of log in the worst scenario (with the

# default Linux settings).

#

# If you have latency problems turn this to "yes". Otherwise leave it as

# "no" that is the safest pick from the point of view of durability.

no-appendfsync-on-rewrite no

# Automatic rewrite of the append only file.

# Redis is able to automatically rewrite the log file implicitly calling

# BGREWRITEAOF when the AOF log size grows by the specified percentage.

#

# This is how it works: Redis remembers the size of the AOF file after the

# latest rewrite (or if no rewrite happened since the restart, the size of

# the AOF at startup is used).

#

# This base size is compared to the current size. If the current size is

# bigger than the specified percentage, the rewrite is triggered. Also

# you need to specify a minimal size for the AOF file to be rewritten, this

# is useful to avoid rewriting the AOF file even if the percentage increase

# is reached but it is still pretty small.

#

# Specify a percentage of zero in order to disable the automatic AOF

# rewrite feature.

auto-aof-rewrite-percentage 100

auto-aof-rewrite-min-size 64mb

################################## SLOW LOG ###################################

# The Redis Slow Log is a system to log queries that exceeded a specified

# execution time. The execution time does not include the I/O operations

# like talking with the client, sending the reply and so forth,

# but just the time needed to actually execute the command (this is the only

# stage of command execution where the thread is blocked and can not serve

# other requests in the meantime).

#

# You can configure the slow log with two parameters: one tells Redis

# what is the execution time, in microseconds, to exceed in order for the

# command to get logged, and the other parameter is the length of the

# slow log. When a new command is logged the oldest one is removed from the

# queue of logged commands.

# The following time is expressed in microseconds, so 1000000 is equivalent

# to one second. Note that a negative number disables the slow log, while

# a value of zero forces the logging of every command.

slowlog-log-slower-than 10000

# There is no limit to this length. Just be aware that it will consume memory.

# You can reclaim memory used by the slow log with SLOWLOG RESET.

slowlog-max-len 1024

################################ VIRTUAL MEMORY ###############################

### WARNING! Virtual Memory is deprecated in Redis 2.4

### The use of Virtual Memory is strongly discouraged.

# Virtual Memory allows Redis to work with datasets bigger than the actual

# amount of RAM needed to hold the whole dataset in memory.

# In order to do so very used keys are taken in memory while the other keys

# are swapped into a swap file, similarly to what operating systems do

# with memory pages.

#

# To enable VM just set 'vm-enabled' to yes, and set the following three

# VM parameters accordingly to your needs.

vm-enabled no

#vm-enabled yes

# This is the path of the Redis swap file. As you can guess, swap files

# can't be shared by different Redis instances, so make sure to use a swap

# file for every redis process you are running. Redis will complain if the

# swap file is already in use.

#

# The best kind of storage for the Redis swap file (that's accessed at random)

# is a Solid State Disk (SSD).

#

# *** WARNING *** if you are using a shared hosting the default of putting

# the swap file under /tmp is not secure. Create a dir with access granted

# only to Redis user and configure Redis to create the swap file there.

vm-swap-file /tmp/redis.swap

# vm-max-memory configures the VM to use at max the specified amount of

# RAM. Everything that does not fit will be swapped on disk *if* possible, that

# is, if there is still enough contiguous space in the swap file.

#

# With vm-max-memory 0 the system will swap everything it can. Not a good

# default, just specify the max amount of RAM you can in bytes, but it's

# better to leave some margin. For instance specify an amount of RAM

# that's more or less between 60 and 80% of your free RAM.

vm-max-memory 0

# The Redis swap file is split into pages. An object can be saved using multiple

# contiguous pages, but pages can't be shared between different objects.

# So if your page is too big, small objects swapped out on disk will waste

# a lot of space. If your page is too small, there is less space in the swap

# file (assuming you configured the same number of total swap file pages).

#

# If you use a lot of small objects, use a page size of 64 or 32 bytes.

# If you use a lot of big objects, use a bigger page size.

# If unsure, use the default :)

vm-page-size 32

# Number of total memory pages in the swap file.

# Given that the page table (a bitmap of free/used pages) is taken in memory,

# every 8 pages on disk will consume 1 byte of RAM.

#

# The total swap size is vm-page-size * vm-pages

#

# With the default of 32-bytes memory pages and 134217728 pages Redis will

# use a 4 GB swap file, that will use 16 MB of RAM for the page table.

#

# It's better to use the smallest acceptable value for your application,

# but the default is large in order to work in most conditions.

vm-pages 134217728

# Max number of VM I/O threads running at the same time.

# These threads are used to read/write data from/to swap file, since they

# also encode and decode objects from disk to memory or the reverse, a bigger

# number of threads can help with big objects even if they can't help with

# I/O itself as the physical device may not be able to cope with many

# read/write operations at the same time.

#

# The special value of 0 turns off threaded I/O and enables the blocking

# Virtual Memory implementation.

vm-max-threads 4

############################### ADVANCED CONFIG ###############################

# Hashes are encoded in a special way (much more memory efficient) when they

# have at max a given number of elements, and the biggest element does not

# exceed a given threshold. You can configure these limits with the following

# configuration directives.

hash-max-zipmap-entries 512

hash-max-zipmap-value 64

# Similarly to hashes, small lists are also encoded in a special way in order

# to save a lot of space. The special representation is only used when

# you are under the following limits:

list-max-ziplist-entries 512

list-max-ziplist-value 64

# Sets have a special encoding in just one case: when a set is composed

# of just strings that happen to be integers in radix 10 in the range

# of 64 bit signed integers.

# The following configuration setting sets the limit in the size of the

# set in order to use this special memory saving encoding.

set-max-intset-entries 512

# Similarly to hashes and lists, sorted sets are also specially encoded in

# order to save a lot of space. This encoding is only used when the length and

# elements of a sorted set are below the following limits:

zset-max-ziplist-entries 128

zset-max-ziplist-value 64

# Active rehashing uses 1 millisecond every 100 milliseconds of CPU time in

# order to help rehashing the main Redis hash table (the one mapping top-level

# keys to values). The hash table implementation redis uses (see dict.c)

# performs a lazy rehashing: the more operations you run against a hash table

# that is rehashing, the more rehashing "steps" are performed, so if the

# server is idle the rehashing is never complete and some more memory is used

# by the hash table.

#

# The default is to use this millisecond 10 times every second in order to

# actively rehash the main dictionaries, freeing memory when possible.

#

# If unsure:

# use "activerehashing no" if you have hard latency requirements and it is

# not a good thing in your environment that Redis can reply from time to time

# to queries with 2 milliseconds delay.

#

# use "activerehashing yes" if you don't have such hard requirements but

# want to free memory asap when possible.

activerehashing yes

################################## INCLUDES ###################################

# Include one or more other config files here. This is useful if you

# have a standard template that goes to all redis server but also need

# to customize a few per-server settings. Include files can include

# other files, so use this wisely.

#

# include /path/to/local.conf

# include /path/to/other.conf
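
Most of the file above is commentary. To see only the settings that are actually in effect, the comment and blank lines can be filtered out. This is a minimal sketch, assuming the file was copied to /etc/redis.conf as in step 1; active_directives is a hypothetical helper name, not part of Redis.

```shell
#!/bin/sh
# Hypothetical helper (not from the original post): print only the active
# directives of a Redis configuration file, skipping comments and blank lines.
active_directives() {
    conf="${1:-/etc/redis.conf}"
    # Drop lines that are empty, whitespace-only, or start with "#"
    # (optionally preceded by whitespace).
    grep -Ev '^[[:space:]]*(#|$)' "$conf"
}

# Usage:
#   active_directives /etc/redis.conf
```

Running it against the file above should list lines such as `daemonize yes`, `port 6379`, and `appendonly yes`, which makes it easy to compare two servers' settings.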

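Explanation of the settings:

1. Whether to run as a background (daemon) process. Default: no.

daemonize no

2. When running as a daemon, a pid file must be specified. Default: /var/run/redis.pid.

pidfile /var/run/redis.pid

3. Listening port. Default: 6379.

port 6379

4. IP address of the interface to bind. Default value: 127.0.0.1 (commented out).

bind 127.0.0.1

5. Client idle timeout in seconds. Default: 300.

timeout 300

6. Log level. There are four options: debug, verbose (default), notice, warning.

loglevel verbose

7. Log destination. Default: stdout.

logfile stdout

8. Number of available databases. Default: 16; the default database is 0.

databases 16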

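9. How many update operations within what time span trigger a sync of the data to the data file. Several conditions can be combined; the default configuration file sets three.

At least 1 key changed within 900 seconds (15 minutes):

save 900 1

At least 10 keys changed within 300 seconds (5 minutes):

save 300 10

At least 10000 keys changed within 60 seconds:

save 60 10000

10. Whether to compress data when saving it to the local database. Default: yes.

rdbcompression yes

11. File name of the local database. Default: dump.rdb.

dbfilename /root/redis_db/dump.rdb

12. Directory where the local database is stored. Default: ./

dir /root/redis_db/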

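13. When this host is a slave, the IP address and port of the master (commented out).

slaveof <masterip> <masterport>

14. When this host is a slave, the password for connecting to the master (commented out).

masterauth <master-password>

15. Connection password (commented out).

requirepass foobared

16. Maximum number of client connections. Unlimited by default (commented out).

maxclients 128

17. Maximum memory. Once the limit is reached, Redis first tries to remove keys that have expired or are about to expire. If memory use is still at the limit after that, no further writes are possible (commented out).

maxmemory <bytes>

18. Whether to log every update operation (append-only mode). If it is disabled, a power failure may lose the data from a period of time, because Redis syncs the data file only according to the save conditions above, so some data exists only in memory for a while. Default: no.

appendonly yes

19. File name of the update log. Default: appendonly.aof (commented out).

appendfilename /root/redis_db/appendonly.aof

20. Sync policy for the update log. There are three options: no leaves it to the operating system to flush its buffers to disk, always calls fsync() after every update to write the data to disk, and everysec syncs once per second (default).

appendfsync everysec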

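21. Whether to use virtual memory. Default: no.

vm-enabled yes

22. Path of the virtual memory (swap) file. Default: /tmp/redis.swap. It cannot be shared by multiple Redis instances.

vm-swap-file /tmp/redis.swap

23. All data beyond vm-max-memory is stored in virtual memory. However small vm-max-memory is, all index data stays in memory (in Redis the index data is the keys). In other words, with vm-max-memory set to 0, all values live on disk. Default: 0.

vm-max-memory 0

24. The swap file is stored in pages of 32 bytes each.

vm-page-size 32

25. Total number of pages in the swap file.

vm-pages 134217728

26. Number of threads that access the swap file. It is best not to exceed the number of CPU cores. If set to 0, all operations on the swap file are serial, which may cause fairly long delays but gives a good guarantee of data integrity.

vm-max-threads 4

27. Glue small output buffers together so that several responses can be sent to a client in a single TCP packet. I am not clear on the exact mechanism or the real effect, so, following the comments, set it to yes when unsure.

glueoutputbuf yes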

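28. Redis 2.0 introduced the hash data structure. As long as a hash holds no more than the specified number of entries and its largest entry does not exceed the size threshold, it is stored in a special encoding that greatly reduces memory use. The two thresholds are set here.

hash-max-zipmap-entries 64

29. Maximum size of a single entry in a hash.

hash-max-zipmap-value 512

30. When enabled, Redis spends 1 millisecond of CPU time in every 100 milliseconds rehashing its hash table, which can reduce memory use. If your use case has very strict latency requirements and cannot accept Redis occasionally delaying a request by 2 milliseconds, set this to no. Without such strict requirements, set it to yes so that memory is freed as quickly as possible.

activerehashing yes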
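
Item 17 takes its limit in bytes, and the unit notes at the top of redis.conf list the suffixes Redis accepts for sizes (1k is 1000 bytes, 1kb is 1024 bytes, and so on, case insensitive). The conversion can be sketched as follows; to_bytes is a hypothetical helper written for illustration, not a Redis tool, and it handles only whole numbers.

```shell
#!/bin/sh
# Hypothetical helper (not from the original post): convert a size written
# with the redis.conf unit suffixes into a plain number of bytes.
to_bytes() {
    # Units are case insensitive, so normalise to lower case first.
    value="$(printf '%s' "$1" | tr 'A-Z' 'a-z')"
    num="${value%%[a-z]*}"      # leading digits
    unit="${value#"$num"}"      # whatever follows the digits
    case "$num" in
        ''|*[!0-9]*) echo "invalid size: $1" >&2; return 1 ;;
    esac
    case "$unit" in
        '') mult=1 ;;
        k)  mult=1000 ;;
        kb) mult=1024 ;;
        m)  mult=1000000 ;;
        mb) mult=1048576 ;;
        g)  mult=1000000000 ;;
        gb) mult=1073741824 ;;
        *)  echo "unknown unit: $unit" >&2; return 1 ;;
    esac
    echo $(($num * $mult))
}

# Usage:
#   to_bytes 64mb    # the auto-aof-rewrite-min-size value used above
```

The decimal and binary suffixes differ noticeably at larger sizes, so it is worth checking which one a limit was written with.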

Redis deployment and usage guide: http://www.elain.org/?p=505

========================================================

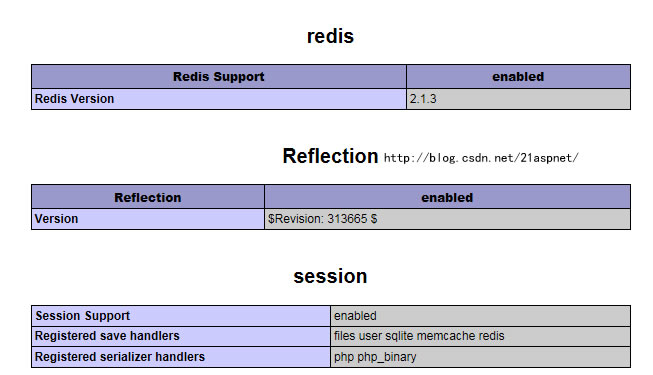

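Installing the Redis extension for PHP

First download the source archive from https://github.com/nicolasff/phpredis/downloads. Running wget against that URL fetches only the download listing page, so pick the archive link from the page; the steps below assume the file nicolasff-phpredis-2.1.3-124-gd4ad907.tar.gz.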

# tar -zxvf nicolasff-phpredis-2.1.3-124-gd4ad907.tar.gz

# mv nicolasff-phpredis-d4ad907 php-5.3.8/ext/phpredis/

# cd php-5.3.8/ext/phpredis/

# /usr/local/php/bin/phpize

# ./configure --with-php-config=/usr/local/php/bin/php-config

# make && make install

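Configure php.ini

# vi /usr/local/php/lib/php.ini

Add the following line:

extension=redis.so

First check whether extension_dir=/……. is already set in the file; it has to point to the directory where make install placed redis.so.

Restart Apache or nginx (with nginx, restart the PHP FastCGI process as well, since that is where the extension is loaded).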

# /usr/local/apache2/bin/apachectl restart
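
After the restart it is worth confirming that PHP actually loaded the extension. This is a minimal sketch, assuming the PHP command-line binary sits next to phpize at /usr/local/php/bin/php (an assumption; the post only shows phpize and php-config in that directory). The helper name php_has_redis and the PHP_BIN override variable are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper (not from the original post): succeed when the PHP
# binary reports a loaded module named "redis".
# PHP_BIN is an assumed override for the PHP command-line binary.
php_has_redis() {
    # `php -m` prints one loaded module name per line; -x matches the
    # whole line so that other names containing "redis" do not count.
    "${PHP_BIN:-/usr/local/php/bin/php}" -m 2>/dev/null | grep -qix 'redis'
}

# Usage:
#   php_has_redis && echo "redis extension loaded" || echo "redis extension missing"
```

The command-line binary may read a different php.ini than the web server module, so a phpinfo() page served through Apache or nginx remains the definitive check.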

Test code:

<?php
$redis = new Redis();
$redis->connect('127.0.0.1', 6379);
$redis->set('test', 'hello world!');
echo $redis->get('test');
?>

References:

Linux (CentOS 5.5): Installing Redis and the Redis PHP Extension

http://www.linuxidc.com/Linux/2011-08/41404.htm

Installing Redis and the phpredis module

http://skandgjxa.blog.163.com/blog/static/14152982011712112933816/

Compiling and installing Redis and its PHP extension library on RHEL5

http://hi.baidu.com/zjstandup/blog/item/9f38b825d379c96c35a80f7f.html

相关文章:

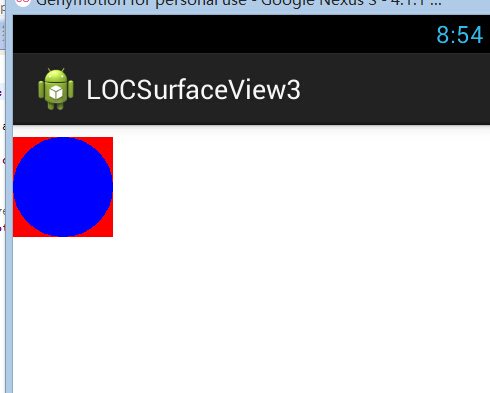

Android UI SurfaceView的使用-绘制组合图型,并使其移动

绘制容器类: //图形绘制容器 public class Contanier {private List<Contanier> list;private float x0,y0;public Contanier(){listnew ArrayList<Contanier>();}public void draw(Canvas canvas){canvas.save();canvas.translate(getX(), getY());chi…

新型混合共识机制及抗量子特性的 Hcash 主链测试链即将上线

由上海交通大学密码与计算机安全实验室(LoCCS)及上海观源信息科技有限公司负责研发的、具有新型混合共识机制及抗量子特性的 Hcash 主链代码已完成并在 2017 年 12 月18 日之前上传至github: https://github.com/HcashOrg/hcashd https://git…

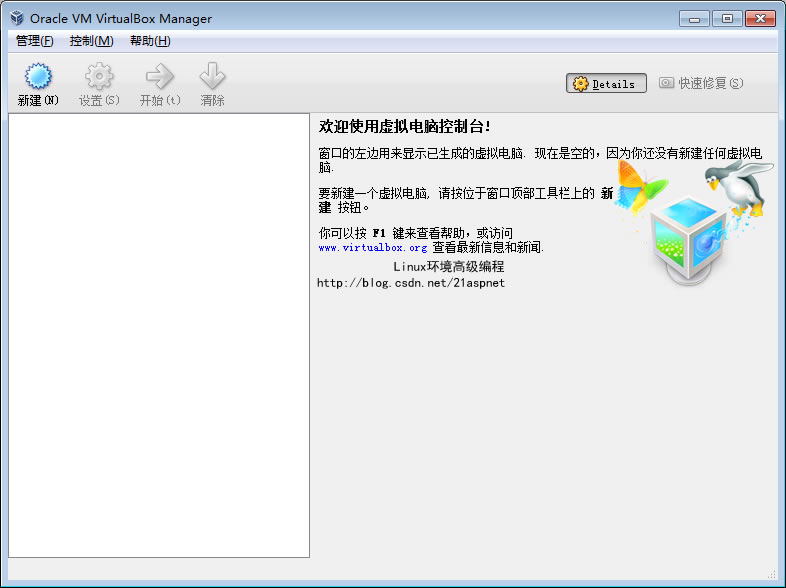

CentOS 6虚拟机安装

这篇博客已经被合并到这里了: 虚拟机安装CentOS以及SecureCRT设置【完美无错版】 下面不用看了,看上面即可 1.下载虚拟机Oracle VM VirtualBox最新的下载地址: http://download.virtualbox.org/virtualbox/4.1.6/VirtualBox-4.1.6-74713-Win…

开发中新游戏《庞加莱》

三体题材的游戏,表现三体人在三体星上生活和冒险。收集水和物器,躲避火焰与巨日,探索遗迹并与巨型生物战斗。温度会因太阳位置不同而发生变化,进而对环境产生一定影响。 游戏开发中。 ---- 2017-4-27版视频: http://v.…

介绍一个打怪升级练习 Python 的网站,寓教于乐~

作者 | 周萝卜来源 | 萝卜大杂烩这是一个学习 Python 的趣味网站,通过关卡的形式来锻炼 Python 水平。一共有 33 关,每一关都需要利用 Python 知识解题找到答案,然后进入下一关。很考验对 Python 的综合掌握能力,比如有的闯关需要…

hive基本操作与应用

通过hadoop上的hive完成WordCount 启动hadoop ssh localhost cd /usr/local/hadoop ./sbin/start-dfs.sh cd /usr/local/hive/lib service mysql start start-all.sh Hdfs上创建文件夹 hdfs dfs -mkdir test1 hdfs dfs -ls /user/hadoop 上传文件至hdfs hdfs dfs -put ./try.tx…

PHP源代码分析-字符串搜索系列函数实现详解

今天和同事在讨论关键字过虑的算法实现,前几天刚看过布隆过滤算法,于是就想起我们公司内部的查找关键字程序,好奇是怎么实现的。于是查找了一下源代码,原来可以简单地用stripos函数查找, stripos原型如下: …

麻省理工研究:深度图像分类器,居然还会过度解读

作者 | 青苹果来源 | 数据实战派某些情况下,深度学习方法能识别出一些在人类看来毫无意义的图像,而这些图像恰恰也是医疗和自动驾驶决策的潜在隐患所在。换句话说,深度图像分类器可以使用图像的边界,而非对象本身,以超…

Oracle 查询转换之子查询展开

概念:子查询展开(Subquery Unnesting)是优化器处理带子查询的目标sql的一种优化手段,它是指优化器不再将目标sql中子查询当作一个独立的处理单元来单独执行,而是将该子查询转换为它自身和外部查询之间等价的表连接。这种等价连接转…

Xcode中通过删除原先版本的程序来复位App

可以在Xcode菜单中点击 Product->Clean Build Folder (按住Option键,在windows键盘中是Alt键.) 此时Xcode将会从设备中删除(卸载uninstall)任何该app之前部署的版本. 接下来重启Xcode,再试一下,有时这可以修复非常奇怪(really weird)的问题.

深入理解PHP之OpCode

OpCode是一种PHP脚本编译后的中间语言,就像Java的ByteCode,或者.NET的MSL。 此文主要基于《 Understanding OPcode》和 网络,根据个人的理解和修改,特记录下来 :PHP代码: <?phpecho "Hello World";$a 1…

关于 AIOps 的过去与未来,微软亚洲研究院给我们讲了这些故事

作者 | 贾凯强出品 | AI科技大本营(ID:rgznai100)在过去的15年里,云计算实现了飞速发展,而这种发展也为诸多的前沿技术奠定了基础,AIOps便在此环境中获得了良好的发展契机。在数字化转型的浪潮下,云计算已经…

JS 正则表达式 0.001 ~99.999

^(0|[1-9][0-9]?)(\.[0-9]{0,2}[1-9])?$转载于:https://www.cnblogs.com/wahaha603/p/9050130.html

深入浅出PHP(Exploring PHP)

一直以来,横观国内的PHP现状,很少有专门介绍PHP内部机制的书。呵呵,我会随时记录下研究的心得,有机会的时候,汇总成书。:) 今天这篇,我内心是想打算做为一个导论: PHP是一个被广泛应用的脚本语言…

懒人神器 !一个创意十足的 Python 命令行工具

作者 | 写代码的明哥来源 | Python编程时光当听到某些人说 xx 库非常好用的时候,我们总是忍不住想要去亲自试试。有一些库,之所以好用,是对一些库做了更高级的封闭,你装了这个库,就会附带装了 n 多依赖库,就…

Regular Expression Matching

正则匹配 Regular Expression Matching Implement regular expression matching with support for . and *. . Matches any single character. * Matches zero or more of the preceding element.The matching should cover the entire input string (not partial).The functio…

PI校正环节的程序实现推导过程

PI校正环节在经典控制论中非常有用,特别是对负反馈控制系统,基本上都有PI校正环节。1.下面分别说明比例环节和积分环节的作用,以阶跃信号为例。①比例环节单独作用以上分析说明,若只有比例环节的控制系统,阶跃响应也是…

几行 Python 代码实现邮件解析,超赞~

作者 | Yunlor来源 | CSDN博客前言如何通过python实现邮件解析?邮件的格式十分复杂,主要是mime协议,本文主要是从实现出发,具体原理可以自行研究。一、安装通过mailgun开源的Flanker库实现邮件解析。该库包含了邮件地址解析和邮件…

深入理解PHP原理之变量(Variables inside PHP)

或许你知道,或许你不知道,PHP是一个弱类型,动态的脚本语言。所谓弱类型,就是说PHP并不严格验证变量类型(严格来讲,PHP是一个中强类型语言,这部分内容会在以后的文章中叙述),在申明一个变量的时候࿰…

jQuery中的.height()、.innerHeight()和.outerHeight()

jQuery中的.height()、.innerHeight()和.outerHeight()和W3C的盒模型相关的几个获取元素尺寸的方法。对应的宽度获取方法分别为.width()、.innerWidth()和.outerWidth(),在此不详述。1. .height()获取匹配元素集合中的第一个元素的当前计算高度值 或 设置每一个匹配…

Python实战之logging模块使用详解

用Python写代码的时候,在想看的地方写个print xx 就能在控制台上显示打印信息,这样子就能知道它是什么了,但是当我需要看大量的地方或者在一个文件中查看的时候,这时候print就不大方便了,所以Python引入了logging模块来…

深入理解PHP原理之变量作用域

作者:laruence(http://www.laruence.com/)地址: http://www.laruence.com/2008/08/26/463.html PHP变量的内部表示是如何和用户脚本中的变量联系起来的呢?也就是说,如果我在脚本中写下:<?php $var"laruen…

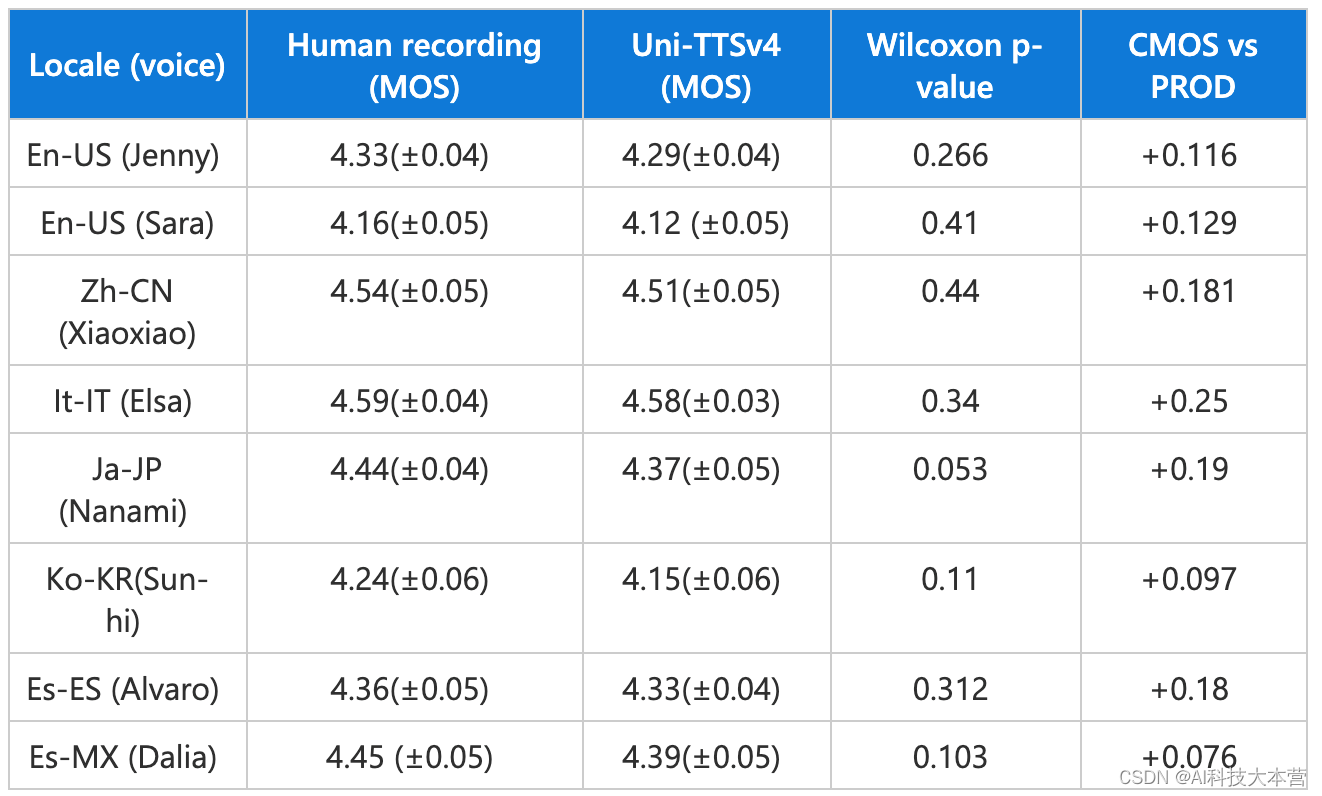

Azure AI的又一里程碑,Neural TTS新模型呈现真人般情感饱满的AI语音

在人与人之间的对话中,即使是同样的字句,也会因为所处情景和情感的不同而表现出丰富的抑扬顿挫,而这种动态性恰恰是各种AI合成语音的“软肋”。相比于人类讲话时丰富多变的语气,AI语音的“心平气和”往往给人带来明显的违和感。 …

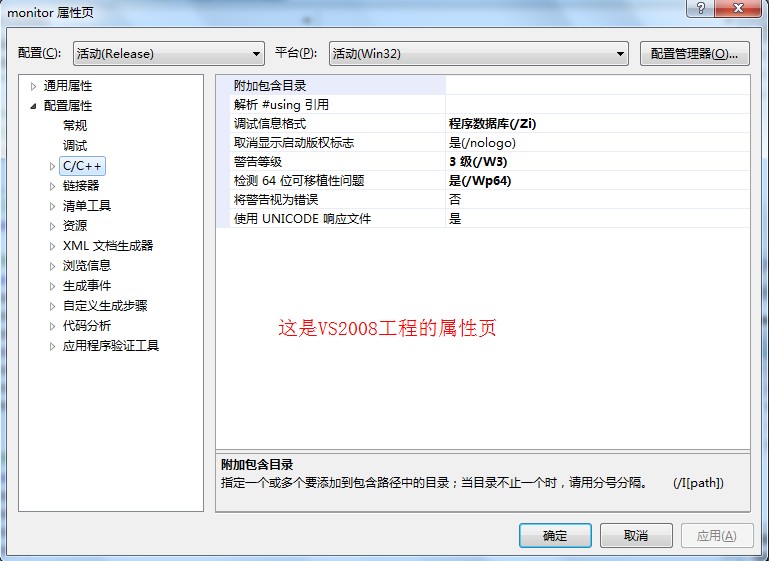

VS2010中“工具选项中的VC++目录编辑功能已被否决”解决方法

http://blog.csdn.net/chaijunkun/article/details/6658923 这是VS2010的改变,不能够在“工具-选项”中看到“VC目录”了。 但是呢,我们可以在另外一个地方找到它,请看下边的对比照片。 VS2008中: VS2010中: 打开方式非…

Bminer 7.0.0 ETH挖矿教程(Linux 64)

Bminer产品介绍Bminer是目前最快的挖矿程序,Bminer是基于NVIDIA GPU深度优化的挖矿软件。Bminer支持Equihash和Ethash两种算法的虚拟币,包括:ETH(以太坊),ETC,ZEC(零币),…

深入理解PHP原理之变量分离/引用(Variables Separation)

引自: http://www.laruence.com/ [风雪之隅 ]在前面的文章中我已经介绍了PHP的变量的内部表示(深入理解PHP原理之变量(Variables inside PHP)),以及PHP中作用域的实现机制(深入理解PHP原理之变量作用域(Scope inside PHP))。这节我们就接着前面的文章,继…

C# 属性、索引

属性(property): public string Name {get{return _name;}set{_name value;} } 简写为: public string Name { set; get;} 索引器(index): 索引器为C#程序语言中泪的一种成员,它是的对象可…

分享几段祖传的 Python 代码,拿来直接使用!

作者 | 周萝卜来源 | 萝卜大杂烩今天分享几段工作生活中常用的代码,都是最为基础的功能和操作,而且大多还都是出现频率比较高的,很多都是可以拿来直接使用或者简单修改就可以放到自己的项目当中日期生成很多时候我们需要批量生成日期…

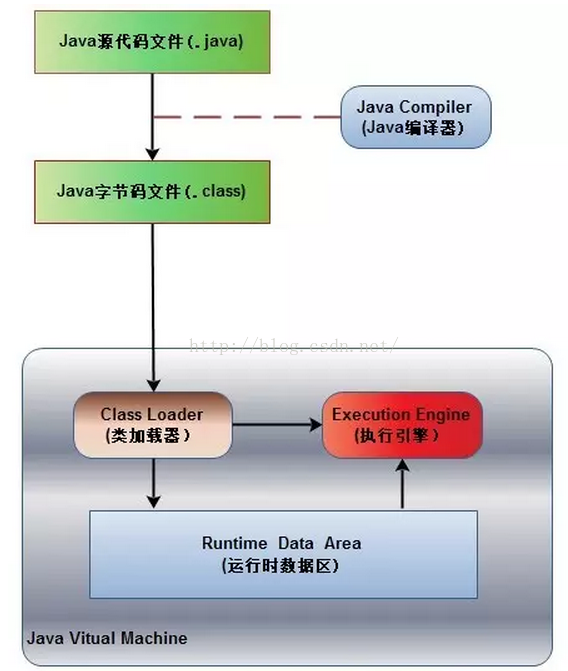

JVM——Java虚拟机架构

Java虚拟机(Java virtualmachine)实现了Java语言最重要的特征:即平台无关性。 平台无关性原理:编译后的 Java程序(.class文件)由 JVM执行。JVM屏蔽了与具体平台相关的信息,使程序可以在多种平台…

深入理解PHP之数组遍历

本文地址: http://www.laruence.com/2009/08/23/1065.html 经常会有人问我, PHP的数组, 如果用foreach来访问, 遍历的顺序是固定的么? 以什么顺序遍历呢? 比如: <?php$arr[laruence] huixinchen;$arr[yahoo] 2007;$arr[baidu] 2008;foreach ($arr as $key >…